Dead Cells: Unlocking Daily Challenge Blueprints

The Daily Challenge mode boasts a significant player base, even compared to other popular modes, and offers several exclusive weapons to boot. However, this is bad news for us freeloaders who, through various means, gained access to the game without ever paying the publisher a single cent. Some may consider piracy bad practice, but to be honest, I’m broke as f*ck.

Since we can’t do the challenge runs like a normal person would, I don’t think it’s much of a stretch to hack the game a bit further.

Overview

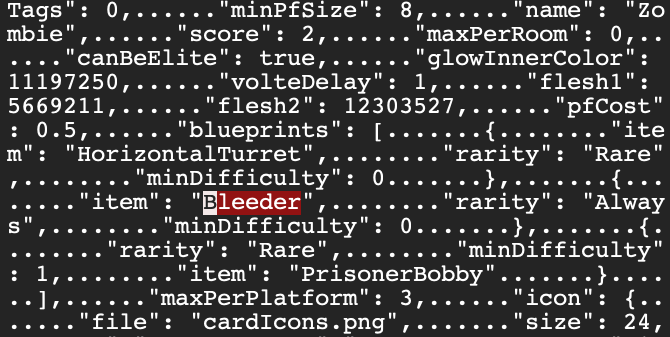

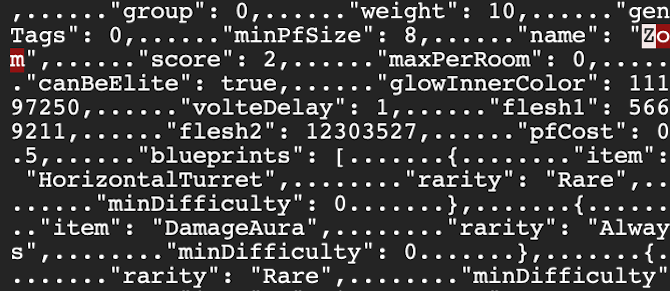

The approach we’re taking is to assign the blueprints to a chosen enemy. Naturally, we’d want an enemy that we run into frequently and that has a decent drop rate. As it happens, the zombie fits that description perfectly.

Let us have a look at the relevant part of its JSON description:

```json
"name": "Zombie",
```

Bleeder is the internal name for Blood Sword, which is typically the first blueprint Dead Cells players obtain on their initial run. As such, it can readily be swapped for a blueprint of our choosing.

Blood Sword

Targets:

- Swift Sword (internal name SpeedBlade), unlocked on the 1st Daily Challenge run
- Lacerating Aura (internal name DamageAura), unlocked on the 5th Daily Challenge run
- Meat Skewer (internal name DashSword), unlocked on the 10th Daily Challenge run
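If you want to double-check the name lengths before touching anything, here is a small Python sketch of the target list above. The TARGETS dictionary and the extra_bytes helper are my own illustration, not anything taken from the game files, and it assumes the internal names are plain ASCII, so one character equals one byte.

```python
# Daily Challenge blueprints from the list above:
# display name -> (internal name, Daily Challenge run that unlocks it)
TARGETS = {
    "Swift Sword": ("SpeedBlade", 1),
    "Lacerating Aura": ("DamageAura", 5),
    "Meat Skewer": ("DashSword", 10),
}

SOURCE = "Bleeder"  # internal name of Blood Sword, the blueprint we give up


def extra_bytes(internal_name: str, source: str = SOURCE) -> int:
    """How many more bytes the target name needs than the name it replaces (ASCII assumed)."""
    return len(internal_name.encode("ascii")) - len(source.encode("ascii"))


for display, (internal, run) in TARGETS.items():
    print(f"{display:<16} {internal:<11} run {run:>2}: +{extra_bytes(internal)} bytes")
```

Every target is longer than Bleeder, which is why the edit later needs to give those bytes back somewhere else.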

Visit the official wiki page for more information!

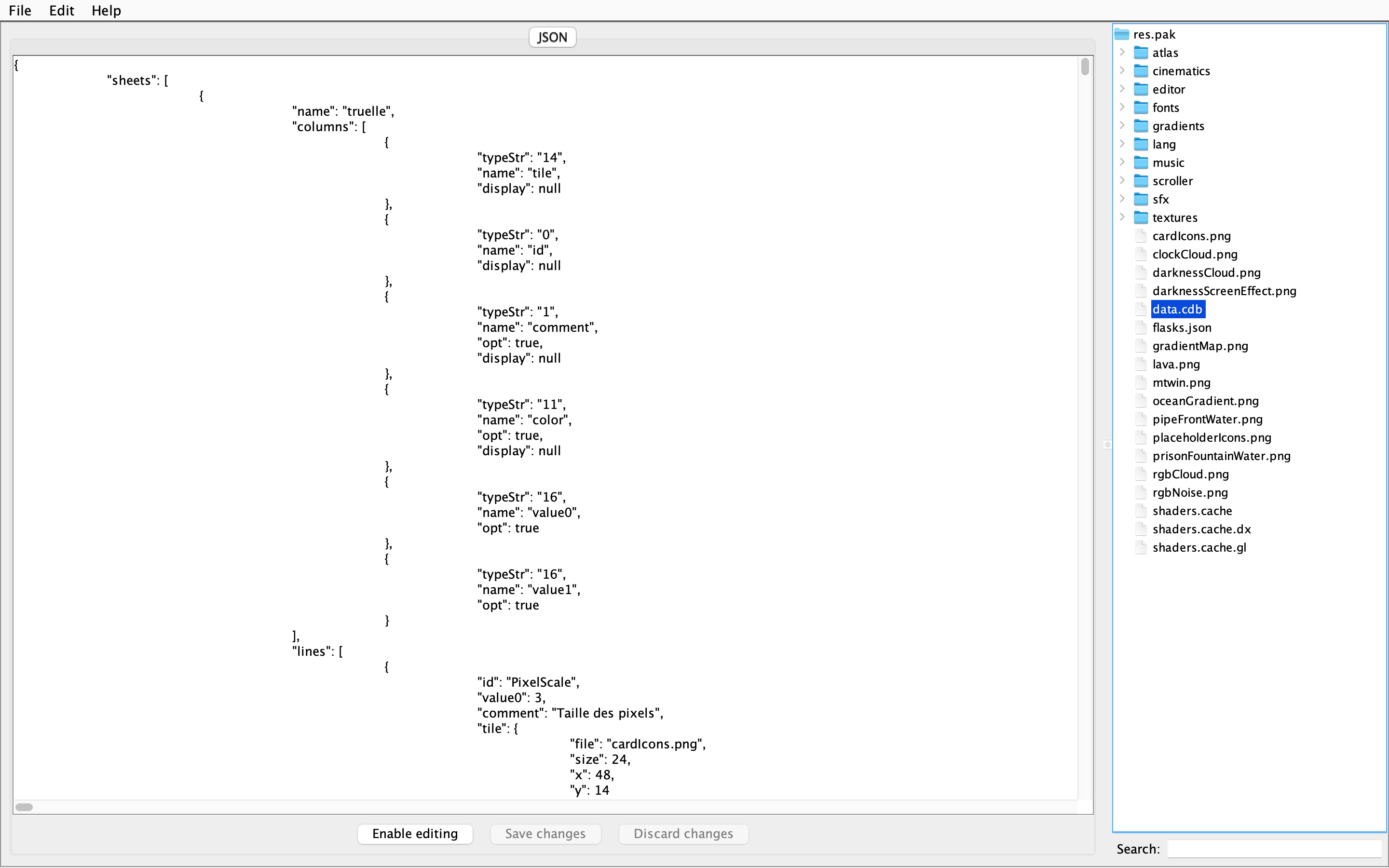

CellPacker

I use CellPacker to extract the data.cdb file so that I don’t have to read it from the hexdump. Inspecting a formatted JSON file is a lot more comfortable!
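Once data.cdb is extracted, a few lines of Python can list every place the string Bleeder shows up in it. This is a minimal sketch that assumes data.cdb parses as ordinary JSON (I believe it is a CastleDB file); the find_string helper and the script name are hypothetical, and it walks the whole tree instead of relying on any particular sheet layout.

```python
import json
import sys
from typing import Any, Iterator


def find_string(node: Any, needle: str, path: str = "$") -> Iterator[str]:
    """Yield a JSONPath-like location for every string value that contains `needle`."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from find_string(value, needle, f"{path}.{key}")
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from find_string(value, needle, f"{path}[{index}]")
    elif isinstance(node, str) and needle in node:
        yield path


if __name__ == "__main__" and len(sys.argv) > 1:
    # Hypothetical usage: python find_bleeder.py /path/to/data.cdb
    with open(sys.argv[1], encoding="utf-8") as fh:
        data = json.load(fh)
    for location in find_string(data, "Bleeder"):
        print(location)
```

Each printed path points at a spot worth inspecting in the formatted file, which makes it easier to see which entries reference the blueprint.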


We can open CellPacker by double-clicking CellPacker.jar, but I suggest launching it from Terminal with the following command to avoid headaches:

```shell
java -jar /path/to/CellPacker.jar
```

GitHub Repo: ReBuilders101/CellPacker

Click here to download CellPacker.jar.

res.pak

As the name suggests, res.pak is the game’s resource pack: it holds everything the graphics and cutscenes need in order to load. It also contains the JSON data files (data.cdb among them) that store the underlying logic behind the game’s interactions.

To hack it, we need a hex editor. I use ghex because it’s simple and easy to use. On a MacBook, we can install it via Homebrew with the following command:

```shell
brew install ghex
```

Note:

Before we begin editing, make sure you have backups of your game saves and of the resource pack we’re editing, in case we make a mistake and corrupt the files.
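If you’d rather not copy things by hand, a short Python sketch can make the backups for you. It copies each path you pass (a file such as res.pak, or a whole save folder) to a sibling with a .bak suffix and refuses to overwrite an existing backup, so running it again after editing won’t clobber the clean copy. The paths below are placeholders; the sketch makes no assumption about where your saves live.

```python
import shutil
import sys
from pathlib import Path


def back_up(path: Path) -> Path:
    """Copy a file or folder to '<name>.bak' next to it, unless that backup already exists."""
    backup = path.with_name(path.name + ".bak")
    if backup.exists():
        # Never overwrite a known-good backup with a copy of an already-edited file.
        return backup
    if path.is_dir():
        shutil.copytree(path, backup)  # e.g. a whole save folder
    else:
        shutil.copy2(path, backup)  # copy2 also preserves timestamps
    return backup


if __name__ == "__main__":
    # Placeholder paths: python backup.py /path/to/res.pak /path/to/save/folder
    for arg in sys.argv[1:]:
        print("backed up to", back_up(Path(arg)))
```

To undo a botched edit, copy the .bak file back over the original.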

ghex

Run ghex from Terminal and open res.pak. Search for Bleeder and replace it with the internal name of one of the three Daily Challenge blueprints.
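Before editing, it can help to know exactly where, and how many times, Bleeder occurs in res.pak. Here is a minimal Python sketch that scans the raw bytes and prints each offset in hexadecimal, which is how hex editors usually label offsets. The script name and path are placeholders.

```python
import sys


def find_offsets(data: bytes, needle: bytes) -> list[int]:
    """Return every byte offset at which `needle` occurs in `data` (overlapping matches included)."""
    offsets = []
    start = data.find(needle)
    while start != -1:
        offsets.append(start)
        start = data.find(needle, start + 1)
    return offsets


if __name__ == "__main__" and len(sys.argv) > 1:
    # Placeholder usage: python find_offsets.py /path/to/res.pak
    with open(sys.argv[1], "rb") as fh:
        blob = fh.read()
    for offset in find_offsets(blob, b"Bleeder"):
        print(f"0x{offset:08X}")
```

If more than one offset comes back, inspect each match in the hex editor before deciding which one to change.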

Please note that the patched file must remain the same size as the original: inserting or deleting bytes shifts everything that follows, which will only complicate matters. Bleeder has 7 characters, while SpeedBlade and DamageAura have 10 and DashSword has 9, so the swap adds 3 or 2 bytes. To compensate, I shortened “Zombie” by the same number of characters. Here’s an example:
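The same-size rule can also be enforced in code. This is a sketch, not the method used in this post (the edit here is done by hand in ghex): patch_same_size is a hypothetical helper that replaces the first occurrence of each byte string and refuses to return a result whose length differs from the input.

```python
def patch_same_size(data: bytes, edits: list[tuple[bytes, bytes]]) -> bytes:
    """Apply each (old, new) edit to the first occurrence of `old`, then require
    the total size to be unchanged, mirroring the manual hex edit."""
    patched = data
    for old, new in edits:
        if old not in patched:
            raise ValueError(f"{old!r} not found")
        patched = patched.replace(old, new, 1)  # first occurrence only
    if len(patched) != len(data):
        raise ValueError(f"size changed by {len(patched) - len(data):+d} bytes")
    return patched
```

For example, on the made-up buffer b'{"name": "Zombie", "drop": "Bleeder"}', the edits [(b"Bleeder", b"SpeedBlade"), (b"Zombie", b"Zom")] return b'{"name": "Zom", "drop": "SpeedBlade"}' with the length unchanged. "Zom" is only a placeholder for however you choose to shorten the name, and the first occurrence in the real res.pak may not be the one you want, so check where each match sits before writing the result back.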
